A few days ago (April 30th) it was the 107th anniversary of the birth of one of my scientific heroes. I meant to write something, but got distracted by the increasing improbability of the statements and claims made by the woke eejits.

The woke, naturally, reject the label ‘woke’ describing it as a dog whistle of those they label the far-right. They, the supreme label ladlers of our age, have one of their usual hissy fits when the tables are turned.

I’m thoroughly sick of their sanctimonious antics, their assertions, their absurd claims that we live in some weird world of intersecting oppressions, guided and influenced at all times and everywhere by invisible matrices of systemic oppressions and privileges, and that the evils of this world can all be traced back to the pernicious influence of whitey.

But isn’t that, one might say, what physicists and mathematicians do? Don’t they construct ephemeral and insubstantial worldviews in which invisible things prance about making our lives difficult? Aren’t dark matter and dark energy just like these woke warblings? I mean, c’mon man, astrophysicists tell us the universe is comprised mostly of this dark stuff. It can’t be seen, can’t (yet) be measured directly, but is said to influence the motion of galaxies.

Doesn’t that sound exactly like the woke BS?

Perhaps. In defence of astrophysicists, though, at least they’re actually trying to find the bloody stuff[1], and are open to, although somewhat resistant to, the possibility they could be completely wrong about it. They are also a little more systematic about their search for its effects.

The woke already have their criterion; if there’s an inequality it’s racism, innit?

I suppose the woke would describe the pernicious effects of racism as being the result of the existence of white matter. I dunno, wouldn’t the world just be so much better[2] without whitey in it? There’d be no more murders, no more crime, everyone would be free to be whatever fantastical gender they’ve dreamed up next, and no one would ever go hungry again.

The certainty with which the woke pontificate and state their laughably absurd views of society and history, their absolute unshakeable conviction that they, and they alone, possess the key to utopia, got me thinking again about the whole business of certainty.

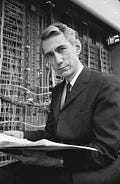

Claude Elwood Shannon[3] was born on the 30th April 1916 and died on the 24th February 2001, and he is indisputably one of the towering intellects of the 20th century.

He even looked the part, didn’t he? Shannon put the whole notion of ‘uncertainty’ onto a rigorous and more certain footing. It was a revolution every bit[4] as profound as Einstein’s.

I first got interested in all this stuff during my undergraduate days. My final year project was to look at a simple nonlinear stochastic system in which the probability distribution displayed a kind of ‘phase transition’; the shape of the distribution suddenly switched (a quite radical change of shape) as a certain parameter reached a threshold. For reasons lost to the monstrous murk of my memory I decided to try to characterize this by using entropy.

It turned out to be fruitful and not just because I got my first publication out of it, but it set me on a path that would shape the rest of my career.

Shannon started getting interested in the notion of digital processing during his post-graduate days. Some of his thesis work showed how the arrangement of telecom routing relays could be simplified using his notions of digital switching. During the war he worked on cryptography. It was during this time, it was said, that he began to really set about revolutionizing the whole way we think about information and uncertainty. He did meet Alan Turing (who also worked on cryptography during the war) and these two great (and dead, and white) men would doubtless have inspired one another[5].

Shannon’s great insight (I should say one of his many great insights) was to realize that information and uncertainty are intimately connected.

Let’s think about this in the context of cryptography. When you encrypt a message what do you do? Well, first off, you code the message mathematically. So, we might represent the message as a sequence of binary digits (bits) like this

$$m = m_1 m_2 \ldots m_n, \qquad m_i \in \{0, 1\}$$

We’d then have a key k - represented by another set of binary digits - and we’d use the key to specify a kind of[6] mathematical ‘shuffling’ of the message m to produce a ciphertext c, which would also be represented by a sequence of bits.

Symbolically it might be represented like this

$$c = E(m, k)$$

We have some encryption function and we feed it the message and the key and it spits out the ciphertext.

The idea is that, without the key, one cannot know (or easily determine) which particular ‘shuffle’ the function has generated.
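To make the idea of an encryption function concrete, here is a minimal sketch in Python. It assumes the simplest possible choice of function, a bitwise XOR of message and key (the classic one-time pad construction); the names `encrypt` and `decrypt` and the particular key are mine, chosen purely for illustration, and real algorithms do a good deal more than this.

```python
def encrypt(message_bits, key_bits):
    """Toy encryption function E(m, k): XOR each message bit with the matching key bit."""
    if len(message_bits) != len(key_bits):
        raise ValueError("message and key must be the same length")
    return [m ^ k for m, k in zip(message_bits, key_bits)]


# XOR is its own inverse, so applying the same key again recovers the message.
decrypt = encrypt

message = [1, 1, 0, 0]      # the 4-bit message 1100
key = [0, 1, 1, 0]          # an arbitrary illustrative key
ciphertext = encrypt(message, key)
recovered = decrypt(ciphertext, key)

print(ciphertext)  # [1, 0, 1, 0]
print(recovered)   # [1, 1, 0, 0]
```

Without the key, the ciphertext 1010 could have come from any of the 16 possible 4-bit messages, each paired with a different key.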

Shannon set about trying to figure out how to characterize the security of an algorithm. How do we know if something is secure?

Suppose there was some technical expression that characterized uncertainty (we’ll give this the label H - which is the one that is most commonly used) then we’d be able to answer a question like “if I am in possession of the ciphertext, c, then how uncertain am I about the actual message, m, that was sent?”

We’d be looking to maximise this uncertainty - and ideally we’d want this uncertainty to be equal to the uncertainty in the original choice of message. If, for example, your message was 4 bits long, say 1100, then the uncertainty about the message cannot exceed the uncertainty of the original choice. There are 16 possible messages that are 4 bits in length - and what you want to do is to force the ‘attacker’ to be able to do no better than guessing which of those possible messages it is.

In symbolic terms, then, we’d be looking to achieve

$$H(m \mid c) = \text{maximum}$$

where this expression reads: the uncertainty about the message, given knowledge of the ciphertext, is maximum. This is not quite there yet - but I’ll get back to it.

The flip side of this is to think about information. If I have the ciphertext, c, then how much information do I have about the actual message, m, that was sent?

Ideally, we would like it if knowledge of the ciphertext gave NO information about the message. If we wrote I as the symbol for information we could write this requirement symbolically as

$$I(m; c) = 0$$

Since the idea of encryption is to actually send the message somewhere, we assume that everyone has access to the ciphertext, at least.

All this symbolic jiggery pokery, of course, doesn’t tell us what this technical expression for ‘information’ is, but it does indicate that it might be a very useful thing to have.

Notice that this, also, is very much of the flavour of “reasoning about reasoning” that I discussed a bit in a previous article. If we can construct a meaningful technical definition of information (or uncertainty) then we might have the tools to be able to reason in general about the properties of crypto algorithms and their security.

So, what is the relationship between information and uncertainty? How do we firm things up a bit?

Suppose I was sending a message (a sequence of 1’s and 0’s) on some channel and the coding machine was broken. All it did was to send a 1, whatever I put into it. I wouldn’t be able to send any actual information on the channel. I’d be trying to communicate - but there would be no communication possible because I have no choice in what gets sent.

It’s quite hard to get your head round, but without the existence of uncertainty in what message I’m sending, I would not be able to send any information at all.

The receiver, on getting the message 11111 . . . , learns nothing at all about what I was trying to say.

Uncertainty, then, is critical if we want to send information.
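The broken coding machine can be put into numbers with a short Python sketch. It uses the standard Shannon entropy formula (the average of log2(1/p) over the possible symbols), which the article has not derived at this point, so treat the formula and the function name `entropy` as assumptions brought in from outside the text.

```python
import math


def entropy(probabilities):
    """Shannon entropy in bits: the sum of p * log2(1/p) over outcomes with p > 0."""
    return sum(p * math.log2(1 / p) for p in probabilities if p > 0)


broken_machine = [1.0, 0.0]       # always sends a 1, never a 0
fair_source = [0.5, 0.5]          # 1 and 0 equally likely
four_bit_messages = [1 / 16] * 16  # 16 equally likely 4-bit messages

print(entropy(broken_machine))     # 0.0
print(entropy(fair_source))        # 1.0
print(entropy(four_bit_messages))  # 4.0
```

The broken machine has zero uncertainty and so can carry zero information, while a free choice among 16 equally likely messages carries 4 bits.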

Now, here’s the next subtlety. This has nothing whatsoever to do with the meaning of the message. I could write some meaningless drivel (I might, for example, reproduce some of the tenets of Critical Race Theory) but this has no bearing on information in the technical sense.

Information is about the range of possible messages I am able to send - not about whether those messages are meaningful.

This semantic kerfuffle between the technical and common language understanding of the word ‘information’ caused no end of difficulty in the early days of Information Theory.

If you’re wanting to maximise the amount of information that can be sent on a channel, then you need to maximise the uncertainty in the possible messages that can be sent on that channel.

This gives us the way forward. If we’re going to construct a technical expression that allows us to quantify uncertainty then what properties might we require?

Well, we wouldn’t want it to be negative would we?

If there is absolute certainty about something, then the uncertainty is zero. We can’t go any lower than that!

We’d also want this quantity to be additive. If we had 2 uncertain things - and these things were completely independent of one another - then the total uncertainty should just be the sum of the two individual uncertainties.

The way uncertain outcomes are usually characterized in mathematics is to assign probabilities to them. What we want as our measure of uncertainty, then, must be a function of those probabilities.

If we rolled two dice then we usually assume the outcomes on dice 1 are independent of the outcomes on dice 2, and vice versa. If we think of the 2 dice as a single ‘system’ then there are 36 possible outcomes and the probability of getting a 2 on one dice and a 4 on the other dice is just the product of the individual probabilities

$$P(2, 4) = P(2) \times P(4) = \frac{1}{6} \times \frac{1}{6} = \frac{1}{36}$$

I’ve assumed a ‘fair’ dice here.

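Our additivity requirement on the uncertainty parameter means that when we have two independent systems like this, the uncertainty parameter must have the property

$$H(p_1 p_2) = H(p_1) + H(p_2)$$

where $p_1$ and $p_2$ are the probabilities of the two independent outcomes.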

OK, I appreciate that for many of you this will be a bit of a head scratcher, but the take away here is really that when two systems have nothing whatsoever to do with one another we can add their uncertainties - and, as we’ll see in a moment, the mathematical logarithm function is (up to a constant factor) the only continuous function that can achieve this property.

This requirement is enough to specify that our uncertainty parameter must be logarithmic, and if we add in the requirement that our parameter must be positive (or equal to zero) then we can prove that

$$H(p) = k \log \frac{1}{p}, \qquad k > 0$$

which means that our technical uncertainty parameter is proportional to the logarithm of the inverse probability.
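For anyone who wants to check the additivity numerically, here is a small Python sketch using the two-dice example. It assumes base-2 logarithms and a proportionality constant of 1, so the answer comes out in bits; the function name `uncertainty` is just an illustrative label.

```python
import math


def uncertainty(p, k=1.0):
    """Uncertainty of an outcome with probability p: k * log2(1/p)."""
    return k * math.log2(1 / p)


p_two = 1 / 6              # a 2 on the first (fair) dice
p_four = 1 / 6             # a 4 on the second (fair) dice
p_joint = p_two * p_four   # independence: probabilities multiply, giving 1/36

joint = uncertainty(p_joint)
separate = uncertainty(p_two) + uncertainty(p_four)

print(f"joint: {joint:.4f} bits, sum of parts: {separate:.4f} bits")
```

Both numbers come out at about 5.17 bits: multiplying the probabilities corresponds to adding the uncertainties, which is exactly what the logarithm does.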

Those of you who have taken physics at a higher level will immediately recognize the connection between this and the Boltzmann equation which relates physical entropy, S, to the number (W) of available microstates for a given macrostate

$$S = k_B \ln W$$

where $k_B$ is Boltzmann’s constant.

This tantalizing relationship between the world of digital processing and the physics of entropy has sparked all sorts of interesting work.

What intrigues me about the whole ‘information theory’ approach, though, is what it might tell us about correlations. If two things are not independent, if their outcomes are in some way correlated, then their uncertainties do not simply add. In this case, knowledge of one system gives me some ‘information’ about the other. Another way of stating this is to say that knowledge of one system reduces my uncertainty about the other. In this case, then, for two systems A and B we’d have

$$H(A, B) \le H(A) + H(B)$$

which means that our total uncertainty about the joint system is less than or equal to the sum of the uncertainties when considering each component on its own. The equality is only achieved when the two systems are independent of one another.
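The effect of correlation can be seen in a toy Python example. It assumes two hypothetical systems, each a coin with outcomes 0 and 1, and uses the standard Shannon entropy formula; the helper names `entropy`, `marginals` and `compare` are illustrative only.

```python
import math
from itertools import product


def entropy(probabilities):
    """Shannon entropy in bits: the sum of p * log2(1/p) over outcomes with p > 0."""
    return sum(p * math.log2(1 / p) for p in probabilities if p > 0)


def marginals(joint):
    """Split a joint distribution {(a, b): p} into the two single-system distributions."""
    first, second = {}, {}
    for (a, b), p in joint.items():
        first[a] = first.get(a, 0.0) + p
        second[b] = second.get(b, 0.0) + p
    return first, second


def compare(joint):
    """Return (joint uncertainty, sum of the two individual uncertainties)."""
    first, second = marginals(joint)
    h_joint = entropy(joint.values())
    h_sum = entropy(first.values()) + entropy(second.values())
    return h_joint, h_sum


# Two fair coins tossed independently: all four outcomes equally likely.
independent = {(a, b): 0.25 for a, b in product([0, 1], repeat=2)}
# Perfectly correlated: the second coin always copies the first.
correlated = {(0, 0): 0.5, (1, 1): 0.5}

print(compare(independent))  # (2.0, 2.0)
print(compare(correlated))   # (1.0, 2.0)
```

For the independent coins the uncertainties simply add. For the correlated pair the joint uncertainty is only 1 bit, because knowing one coin tells you everything about the other.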

In cryptography terms, then, with our message m and our ciphertext c we need to get as close to the following condition as possible

$$H(m \mid c) = H(m)$$

In other words we require that (subsequent) knowledge of the ciphertext does not reduce our initial uncertainty about the message.
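Here is a hedged Python sketch of what that requirement looks like in the smallest possible case. It assumes a 1-bit message encrypted by XOR with a 1-bit key, a made-up message distribution (0 three times as likely as 1), and the standard identity that the uncertainty about m given c equals the joint uncertainty of (m, c) minus the uncertainty of c. None of these specifics come from Shannon’s paper; they are chosen only to keep the arithmetic visible.

```python
import math
from collections import defaultdict


def entropy(probabilities):
    """Shannon entropy in bits: the sum of p * log2(1/p) over outcomes with p > 0."""
    return sum(p * math.log2(1 / p) for p in probabilities if p > 0)


def conditional_entropy(joint):
    """Uncertainty about m given c, computed as H(m, c) - H(c), for joint = {(m, c): p}."""
    p_c = defaultdict(float)
    for (_, c), p in joint.items():
        p_c[c] += p
    return entropy(joint.values()) - entropy(p_c.values())


def joint_distribution(p_message, p_key):
    """Joint distribution of (message, ciphertext) when ciphertext = message XOR key."""
    joint = defaultdict(float)
    for m, pm in p_message.items():
        for k, pk in p_key.items():
            joint[(m, m ^ k)] += pm * pk
    return joint


p_message = {0: 0.75, 1: 0.25}  # hypothetical: the sender favours 0
random_key = {0: 0.5, 1: 0.5}   # key chosen uniformly at random
fixed_key = {0: 1.0}            # "broken" key: always 0, so c equals m

h_m = entropy(p_message.values())
h_secure = conditional_entropy(joint_distribution(p_message, random_key))
h_broken = conditional_entropy(joint_distribution(p_message, fixed_key))

print(f"H(m)              = {h_m:.3f} bits")
print(f"H(m|c) random key = {h_secure:.3f} bits")
print(f"H(m|c) fixed key  = {h_broken:.3f} bits")
```

With the random key, seeing the ciphertext leaves the uncertainty about the message unchanged (about 0.811 bits in both cases), so the information gained is zero. With the fixed key the ciphertext gives the message away and the remaining uncertainty drops to zero.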

Now that we have, thanks to Shannon, a mathematical expression that characterizes uncertainty we can start to use it to prove things - to be able (potentially) to set the operational boundaries required to achieve security. And Shannon’s work has proven to be very useful in all sorts of ways - not just in cryptography.

I know this is quite difficult and subtle stuff - but (a) it’s bloody clever and (b) very useful and (c) yet another extraordinary achievement of a dead white man.

Shannon was awesome.

The woke, meanwhile, are busy digging through the history books to try to figure out whether Shannon’s great grandfather owned slaves.

[1] Unlike, say, Jussie Smollett who had been searching for this all-pervasive hateful white matter to no avail, and had to construct the ‘evidence’ for himself by employing the services of two Nigerian white supremacist brothers Abimbola and Olabinjo Osundairo.

[2] We should be careful, though. One theory posits that we need more white people to save the planet. White people reflect the sun more and thus contribute to an increased albedo, ultimately leading to a lowering of global temperatures (no, I’m not being at all serious, in case you were wondering).

[3] A real bona fide dead white man who, so the woke fable goes, couldn’t have contributed anything but oppression and misery to the world.

[4] And, yes, the pun was intentional.

[5] And even though I probably wouldn’t have understood a word of it I would love to have overheard that conversation.

[6] It’s a bit more than simply ‘shuffling’ the bits in the message. Simple shuffling is known technically as a permutation and is just one component of a typical crypto algorithm.

Dr. Aseem Malhotra, a renowned cardiologist from England, has done much work over the last decade to expose the cholesterol industry and bring awareness to the statin scam. Because of his scientific literacy and charisma, he has succeeded in building bridges toward controversial ideas with many members of the medical and political establishment. Additionally, he is frequently invited by the mainstream media to provide a dissenting voice on controversial topics—both feats very few have pulled off. I thought this interview with Joe Rogan might be of interest.

The Cholesterol question is a good one and one I am interested in as I am a Carnivore, I do not eat any plant material at all. The Cholesterol fable is often quoted to me even by my local butcher, "Your arteries will clog up with all this bacon fat" he says to me when I buy my 80% fat American Bacon and untrimmed Tritip joints (masses of delicious fat) My massive health improvements give me CERTAINTY that this diet is great for me. 🤠👍

Below is all about Covid BS from a very qualified Doctor.

https://www.bitchute.com/video/RgnHBPQV5DNZ/?utm_source=substack&utm_medium=email

There's a certain kind of guy. They stare at you straight out of a photo, revealing a force of intellect and personality, and you immediately think "There is a man who is not to be fucked with".

Professor Shannon was one of those men. What a photo!

Most of my professional career centred around high speed sampling of radar data for target extraction and Doppler processing. And as soon as you say "sampling", you run across Harry Nyquist and Claude Shannon. Funnily enough, I'd never seen pictures of either man until today.

Another fine exposition, Sir. Thank you once again for bringing back so many memories. Some were fond (getting a new radar technology working back in the late 90s), some not so fond (uni examination rooms), but I wouldn't trade any of them for all the Critic Theory university departments in the entire world. That collected latter group are, in my view, not worth the steam off my piss on a cold morning.