In my previous stack I returned to the question of how the ‘efficacy’ of a vaccine can be manipulated by either (a) ignoring some data or (b) mis-categorizing some data.

This data diddling has been pointed out and examined by many others so I wasn’t pointing out anything new, but I have been trying to approach this from an algebraic perspective to see what insights we could gain, if any. In particular, I wanted to see what impact this diddling might have; would it be a large or small impact and could that be demonstrated algebraically?

As I mentioned in that article, there’s a very interesting result to be seen.

To simplify matters I imagined a vaccine schedule such that:

- those who were going to be vaccinated were vaccinated on day 1
- some time later (maybe 3 months, for example) the data was examined

Now, in my view, the only correct way to calculate vaccine efficacy here is to compare the death rate in the un-jabbed group with the death rate in the jabbed group. This gives an assessment of whether getting jabbed is helpful or not, and to what extent.

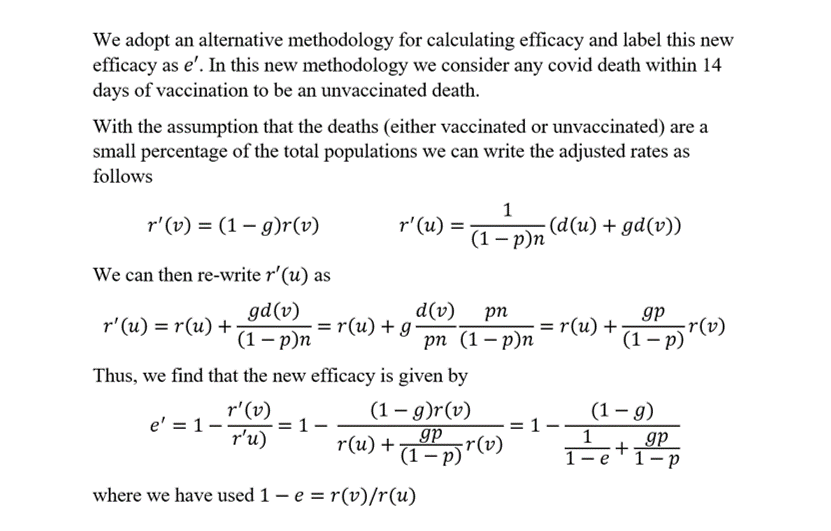
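A minimal sketch of that comparison in Python, assuming “efficacy” here means one minus the ratio of death rates (jabbed over un-jabbed), which is the usual relative-risk definition. The function name and the example counts are hypothetical, chosen only for illustration.

```python
def true_efficacy(deaths_jabbed, n_jabbed, deaths_unjabbed, n_unjabbed):
    """Efficacy as 1 - (death rate in jabbed / death rate in un-jabbed).

    Assumption: this relative-risk definition is the one intended in the text.
    """
    rate_jabbed = deaths_jabbed / n_jabbed
    rate_unjabbed = deaths_unjabbed / n_unjabbed
    return 1.0 - rate_jabbed / rate_unjabbed


# Hypothetical counts: 700,000 jabbed with 350 deaths,
# 300,000 un-jabbed with 300 deaths.
print(true_efficacy(350, 700_000, 300, 300_000))
```

With these made-up counts the jabbed death rate is half the un-jabbed rate, so the result comes out at about 0.5, i.e. 50% efficacy. Because the calculation uses rates rather than raw counts, it does not matter that the two groups are different sizes.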

However, we know that in some cases those who died from covid within 14 days of getting jabbed have been categorized as “unvaccinated” deaths in official records. I referred to this as “diddle 2”. This methodology leads to a different result for vaccine “efficacy” - one which is higher than the efficacy we get from the unmanipulated comparison. I call the efficacy from the unmanipulated comparison the true efficacy.
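To see why the reclassification inflates the figure, here is a rough Python sketch with made-up numbers. It assumes the group sizes are left alone and only the early deaths are moved from the jabbed tally to the un-jabbed tally. The helper name `efficacy` and all the counts are hypothetical.

```python
def efficacy(deaths_jabbed, n_jabbed, deaths_unjabbed, n_unjabbed):
    """1 - (death rate in jabbed / death rate in un-jabbed)."""
    return 1.0 - (deaths_jabbed / n_jabbed) / (deaths_unjabbed / n_unjabbed)


# Hypothetical population: 500,000 jabbed and 500,000 un-jabbed,
# with 500 covid deaths in each group (so the jab does nothing).
n_jabbed, n_unjabbed = 500_000, 500_000
deaths_jabbed, deaths_unjabbed = 500, 500

# Assume 250 of the jabbed deaths happened within 14 days of the jab.
early_deaths = 250

honest = efficacy(deaths_jabbed, n_jabbed, deaths_unjabbed, n_unjabbed)

# Diddle 2: count the early jabbed deaths as "unvaccinated" deaths.
diddled = efficacy(deaths_jabbed - early_deaths, n_jabbed,
                   deaths_unjabbed + early_deaths, n_unjabbed)

print(f"true efficacy:     {honest:.1%}")
print(f"diddle 2 efficacy: {diddled:.1%}")
```

With these invented numbers, a jab that does nothing at all shows an apparent efficacy of about 67%, because each moved death both lowers the jabbed tally and raises the un-jabbed one.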

What I alluded to in my previous article, and want to show today, is a result I haven’t seen before: the diddle 2 efficacy actually depends on the percentage of people who have been vaxxed.

If I’ve done the analysis correctly this is a shocking result. It surprised me. It means that, under the diddle 2 methodology, you can improve the apparent “efficacy” of your vaccine simply by vaccinating more people.

Yes - you read that right. You want to make your vaccine have a higher efficacy? Simple - use the diddle 2 methodology for your calculation of “efficacy” and just vaccinate more people!

I’ll put the analysis in an appendix section - so please do check my working. I don’t think I’ve made a mistake - but an independent check would be helpful. It’s a very simple analysis requiring only a modest amount of algebra.

The results, if right, are somewhat shocking.

What I ended up with is an expression for the “efficacy” you would calculate using the diddle 2 methodology. This new, diddled efficacy is a function of (that is, it depends on) the true efficacy, the goo factor, and the vaxxed percentage.

The goo factor, g, is defined as the fraction of vaxxed deaths that occur within 14 days of getting the jab.
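As a sketch of how such an expression can arise, and not necessarily in the same form as the appendix: write E for the true efficacy, v for the vaxxed fraction, N for the population size and r for the covid death rate among the un-jabbed (N and r are symbols introduced here for illustration only). The assumption is that the early deaths are moved to the un-jabbed tally while the group sizes stay the same.

```latex
\begin{align*}
\text{jabbed deaths} &= vNr(1-E), &
\text{un-jabbed deaths} &= (1-v)Nr, \\
\text{reported jabbed deaths} &= vNr(1-E)(1-g), &
\text{reported un-jabbed deaths} &= (1-v)Nr + vNr(1-E)g,
\end{align*}
\begin{equation*}
E_{\text{diddled}}
  = 1 - \frac{vNr(1-E)(1-g)/(vN)}
             {\bigl[(1-v)Nr + vNr(1-E)g\bigr]/\bigl((1-v)N\bigr)}
  = 1 - \frac{(1-E)(1-g)}{1 + \dfrac{v}{1-v}\,(1-E)\,g}.
\end{equation*}
```

Under these assumptions N and r cancel, setting g = 0 gives back E, and whenever g is between 0 and 1 and E is below 1 the term v/(1-v) makes the result grow as v grows.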

Here are the charts

You can see here that the true efficacy (in blue) does not depend on the percentage of people you vax (obviously).

You can also see in the 2 charts on the left hand side that even if the true efficacy is zero, the manipulated “efficacy” can be substantial.

So, for example, if you have something like 50% of jabbed covid deaths occurring within 14 days of being jabbed (as we had in Alberta) then even with zero true efficacy you can come up with a figure of around 80% vax “efficacy” if you manage to vaccinate 70% of your population.
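That figure can be checked with a short Python sketch. The closed form used here is an assumption: it is what you get if early jabbed deaths are moved to the un-jabbed tally and the group sizes are left alone, and it may differ in form from the expression in the appendix. The function and parameter names are hypothetical.

```python
def diddled_efficacy(true_eff, goo, vaxxed_frac):
    """Apparent efficacy under diddle 2 (assumed closed form).

    true_eff    -- true efficacy E, as a fraction
    goo         -- goo factor g: share of jabbed deaths within 14 days of the jab
    vaxxed_frac -- fraction of the population jabbed, strictly between 0 and 1
    """
    odds_vaxxed = vaxxed_frac / (1.0 - vaxxed_frac)
    risk_ratio = ((1.0 - true_eff) * (1.0 - goo)
                  / (1.0 + odds_vaxxed * (1.0 - true_eff) * goo))
    return 1.0 - risk_ratio


# Zero true efficacy, goo factor of 50%, at several vaccination levels.
for vaxxed_frac in (0.1, 0.3, 0.5, 0.7, 0.9):
    apparent = diddled_efficacy(0.0, 0.5, vaxxed_frac)
    print(f"{vaxxed_frac:.0%} vaxxed -> {apparent:.1%}")
```

At 70% vaxxed this gives roughly 77%, in line with the “around 80%” quoted above, and the apparent efficacy keeps climbing as the vaxxed fraction goes up even though the true efficacy is held at zero throughout.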

That, to me, is astonishing and highlights the serious implications of this kind of data manipulation. I couldn’t tell you whether this manipulation was intentional fraud or done in good faith by people who thought they were doing the right thing (technically). What I can categorically state is that this methodology is utterly flawed if you want to arrive at anything like an accurate figure of vaccine efficacy.

I'm sure this 'misuse' of algebra is now considered a hate crime in Canada.

https://en.m.wikipedia.org/wiki/Omar_Alghabra

I was trying to figure out why, in Pfizer's study of children aged 6 months to 4 years, their vaccinated group was about double the size of their placebo group (prior to unblinding, when, if I remember correctly, 100% of the placebo group was vaccinated). They then based efficacy on children who contracted Covid 7 days or more after Dose 3. Is this data diddling similar to what you discuss above? Obviously, for those of us who live in the real world (and if we were actually dealing with a disease that seriously harmed children), what matters isn't the likelihood that our children will become ill more than 3 months after their first vaccination in the series but the likelihood that our children will become ill at any point after that first shot. (Including the period post-unblinding when the placebo group went bye-bye.)